Your team is shipping faster than ever with AI. And you still don’t know if it’s safe to release.

Release cycles are short. Automation is in place. Testing activity looks huge. Yet the clear information you need to make a well-informed release decision is missing. Release conversations drag on. Reports are scattered across platforms and fail to answer basic questions. That’s when you start relying on instinct rather than evidence.

This is where most leaders misread the situation, and where bad decisions get made.

Many leaders assume:

- More automation will fix testing

- More test cases will improve coverage

- More testers will reduce the time to market

- More AI tools will make testing efficient

If any of these could fix testing on its own, the problem would be solved by now. In reality, the issue sits elsewhere: the system that is supposed to ground your team’s quality decisions in testing information is no longer doing its job.

Modern test management closes that gap. It helps teams understand what is happening, especially when things are uncertain and changing fast.

This article breaks down that shift and walks through seven key features you should expect from modern test management, because we are no longer shipping software the way we did in the nineties.

Emerging Industry Trends (AI and Quality)

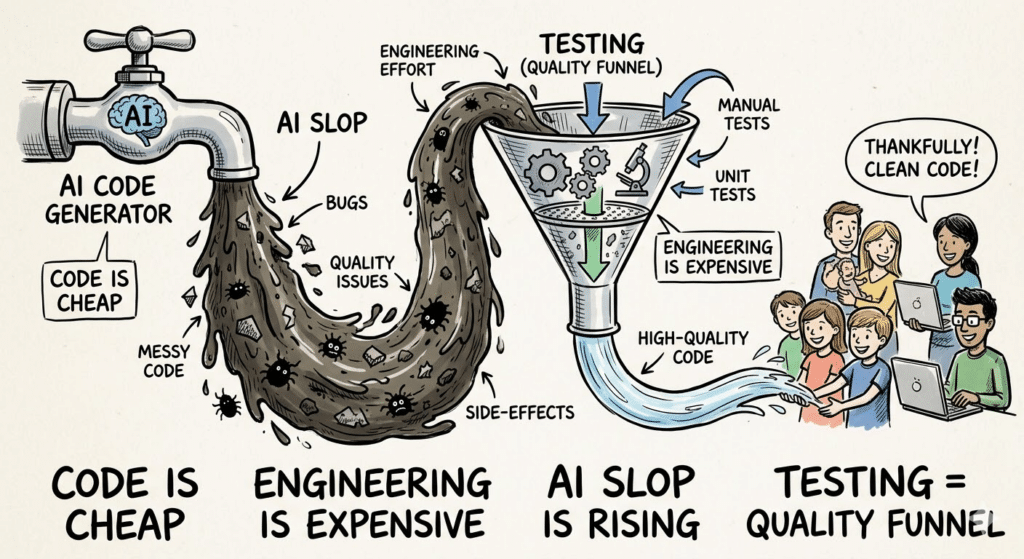

With AI, code is no longer the biggest bottleneck for software development. We are slowly moving from a creation bottleneck to a validation bottleneck. This also creates interesting challenges for testing and quality engineering.

Code is cheap. Engineering is expensive.

With AI assistance, teams will build software faster than ever. The real cost now sits in validation and quality engineering. We are producing code at an accelerating pace, which means you can generate features faster than you can understand them.

So you now need more testing work to understand whether this code behaves correctly in real, dynamic, and diverse conditions.

AI slop is on the rise

“Slop” (low-quality AI-generated output) was named a word of the year for 2025, and that says something in itself. The danger isn’t that all AI code is wrong. It’s that it looks right enough to pass through your usual checks. Problems appear later, when:

- Edge cases behave differently or are ignored

- Integrations fail silently

- Data flows break under load

- Real production data arrives

This creates a hidden risk that traditional test management was not designed for. Testing is no longer just about execution; it is your slop filter. If your system cannot separate signal from noise, your team ends up busy but uncertain.

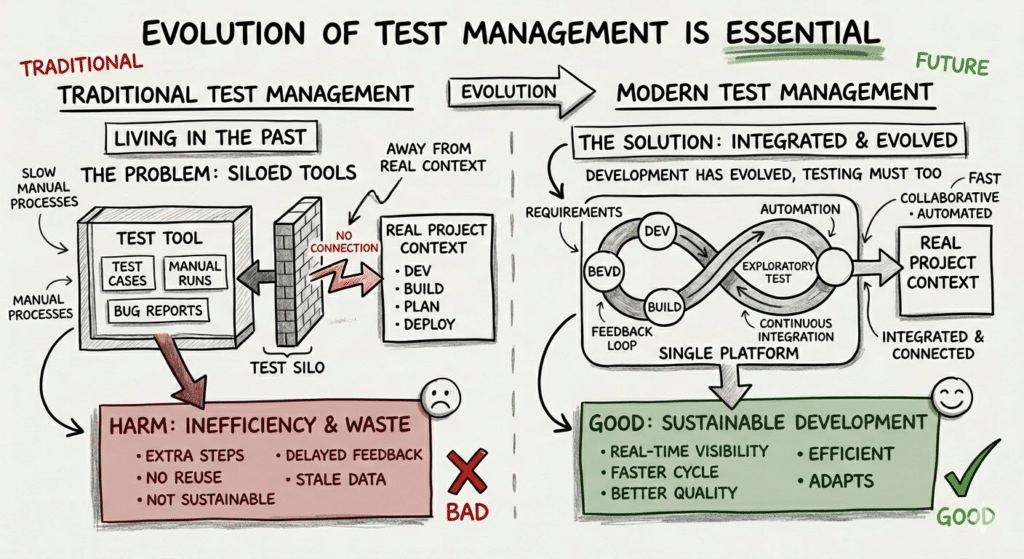

The Problem: Traditional Test Management is Stuck in the Past

Traditional test management is structurally unfit for today and ill-suited to what’s coming. It still assumes a predictable world. Its templates, workflows, capabilities, and features haven’t evolved much over the last two decades.

Most traditional test management platforms:

- Force testing into predefined test cases

- Optimize for execution, not insights

- Deliver narrowly focused reporting

- Assume all testing can be captured upfront in test cases

That model worked when systems were simpler. In modern environments, new failure patterns emerge:

- Siloed tools across teams, which erode shared context

- Repeated effort because no one knows what already exists

- Reports that look complete but fail to guide decisions

- Exploratory testing work remains invisible

- Test assets fragment across versions and environments

- Rigid workflows that can’t adapt to custom and changing needs

This is the slow breakdown that prevents QA systems from scaling. Things don’t collapse overnight. They gradually become harder to reason about.

Industry Shift: Modern Test Management

Modern test management systems are built for a different problem. They connect the entire software lifecycle, moving from:

- Exploration → Learning (not just execution)

- Confirmation → Validation (not just pass/fail status)

- Reporting → Decision-making (not just status of test runs)

They prioritize:

- AI-assisted decision-making

- End-to-end testing flow (from exploration to confirmation)

- Integrated context

- Reporting that answers real questions

- Flexibility to match real processes

- Faster time-to-value from signal, not execution volume

Instead of asking “How much testing did we do?”, they help answer “What do we know about quality right now?”

If your test management tool cannot help here, then every new feature is just a layer of polish on the same old broken model.

Here are the capabilities that actually matter for modern test management. I’ll anchor each one in a practical tool, PractiTest, rather than in theory alone.

1. AI capabilities that improve focus, not just output

Most teams misunderstand where AI actually helps in testing.

They assume it’s about generating more tests or writing better steps. That’s the visible layer. The real shift is happening deeper: in how test management systems connect with AI agents and share context safely.

MCP: The Foundation for Agent-driven Workflows

This is the part most leaders will miss if they only look at UI features.

PractiTest’s Model Context Protocol (MCP) support lets it integrate with MCP-compatible agentic systems in a structured and controlled way. That keeps your test management open to whatever agent-driven workflows come next.

In practical terms, PractiTest’s MCP capability allows AI agents to:

- Retrieve project lists

- Check requirement coverage

- Create a new test

- Link that test to a requirement

- Create test sets

- Add tests to those sets

All of this happens through safe, controlled context sharing, which is critical for enterprise data. It lets AI actually operate within your boundaries.

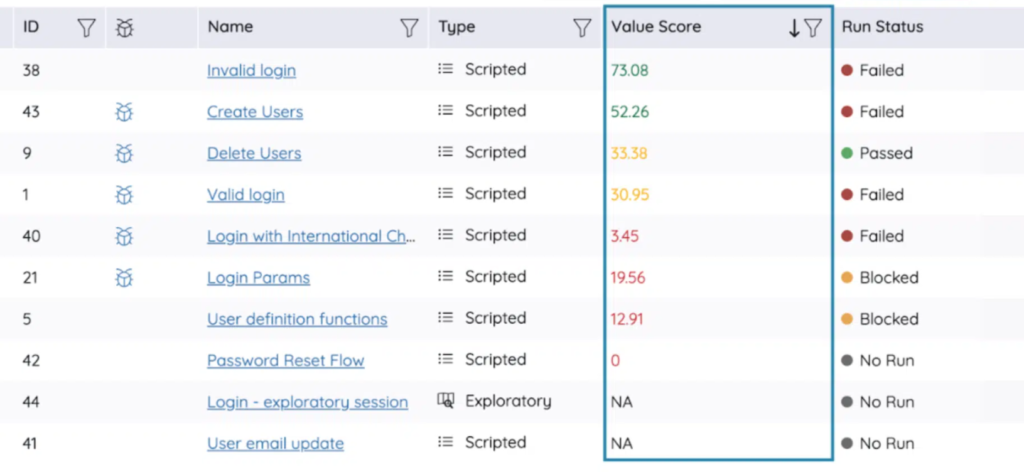
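To make the MCP flow less abstract, here is a minimal Python sketch of the JSON-RPC 2.0 messages an agent sends when it calls tools on an MCP server. The `tools/call` method and its `name`/`arguments` shape come from the MCP specification; the tool names (`create_test`, `link_test_to_requirement`) and their arguments are hypothetical placeholders, since a real PractiTest MCP server advertises its own tools through `tools/list`.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC ids must be unique within a session

def tool_call(name: str, arguments: dict) -> str:
    """Serialize one MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })

# Hypothetical tools and arguments; a real server lists its tools via `tools/list`.
plan = [
    ("create_test", {"project_id": 123, "name": "Checkout applies coupon"}),
    ("link_test_to_requirement", {"test_id": 456, "requirement_id": 789}),
]

for tool, args in plan:
    print(tool_call(tool, args))
```

In practice an MCP client library handles this framing for you. The point is that every action is an explicit, inspectable tool call rather than free-form access to your data.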

Test Value Score

Most teams are not short on tests. They are short on clarity about which tests still matter.

AI makes this worse by generating more content, faster. That makes it essential to use AI features sapiently. PractiTest takes a more practical route by focusing on usefulness.

One example is the test value score. It evaluates signals such as:

- Status changes across executions

- Linked defects or requirements

- Usage patterns

- How frequently the test is actually used

This helps teams identify which tests contribute to quality and which ones add maintenance overhead.
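PractiTest’s actual scoring formula isn’t described here, so the following is only an illustration of the idea: a hypothetical Python function that blends usage, linkage, and status-change signals into a score between 0 and 1. The field names, caps, and weights are all assumptions chosen for readability.

```python
from dataclasses import dataclass

@dataclass
class TestSignals:
    status_changes: int     # result flips across recent executions
    linked_items: int       # linked defects plus linked requirements
    runs_last_90_days: int  # how often the test was actually executed

def value_score(s: TestSignals, max_runs: int = 30) -> float:
    """Blend signals into a 0..1 score. Caps and weights are illustrative assumptions."""
    usage = min(s.runs_last_90_days, max_runs) / max_runs
    linkage = min(s.linked_items, 5) / 5
    # Assumption: a test whose result sometimes changes is detecting something,
    # while one that never changes may add little beyond maintenance cost.
    activity = min(s.status_changes, 3) / 3
    return round(0.4 * usage + 0.35 * linkage + 0.25 * activity, 2)

# A frequently run, well-linked test versus one nobody has touched.
print(value_score(TestSignals(status_changes=2, linked_items=3, runs_last_90_days=25)))
print(value_score(TestSignals(status_changes=0, linked_items=0, runs_last_90_days=0)))
```

Frequent status flips can also signal flakiness, so a real score would need to tell the two apart.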

AI Test Optimization

Over time, duplication creeps in. Different teams create variations of the same test without realizing it. Detecting this overlap helps reduce redundancy, improve reuse, and save time.

PractiTest uses a combination of AI capabilities to:

- Detect similar tests to avoid duplicate coverage

- Detect similar issues so you can build on existing items

- Enable test case & test step reuse

- Refine test cases for clarity and completeness

- Shorten overly verbose tests or expand incomplete ones
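The AI behind these features isn’t described in detail, so here is a deliberately simple stand-in for the “detect similar tests” idea: compare normalized step text pairwise with Python’s `difflib.SequenceMatcher` and flag pairs above a threshold. Production tools typically rely on semantic similarity (for example, text embeddings) instead; the 0.85 threshold and the sample tests are assumptions.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize(steps: list[str]) -> str:
    """Lowercase and join steps so formatting differences don't hide overlap."""
    return " ".join(step.lower().strip() for step in steps)

def similar_pairs(tests: dict[str, list[str]], threshold: float = 0.85):
    """Yield (id_a, id_b, ratio) for every pair of tests at or above the threshold."""
    texts = {test_id: normalize(steps) for test_id, steps in tests.items()}
    for a, b in combinations(sorted(texts), 2):
        ratio = SequenceMatcher(None, texts[a], texts[b]).ratio()
        if ratio >= threshold:
            yield a, b, round(ratio, 2)

tests = {
    "T-1": ["Open login page", "Enter valid credentials", "Click Sign in"],
    "T-2": ["Open the login page", "Enter valid credentials", "Click Sign in"],
    "T-3": ["Add item to cart", "Apply coupon SAVE10", "Verify total"],
}

for a, b, ratio in similar_pairs(tests):
    print(f"{a} and {b} look like duplicates (similarity {ratio})")
```

Pairwise comparison grows quadratically with the number of tests, which is fine for a sketch but one reason larger systems index tests before comparing them.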

2. Exploratory Testing (ET) Support

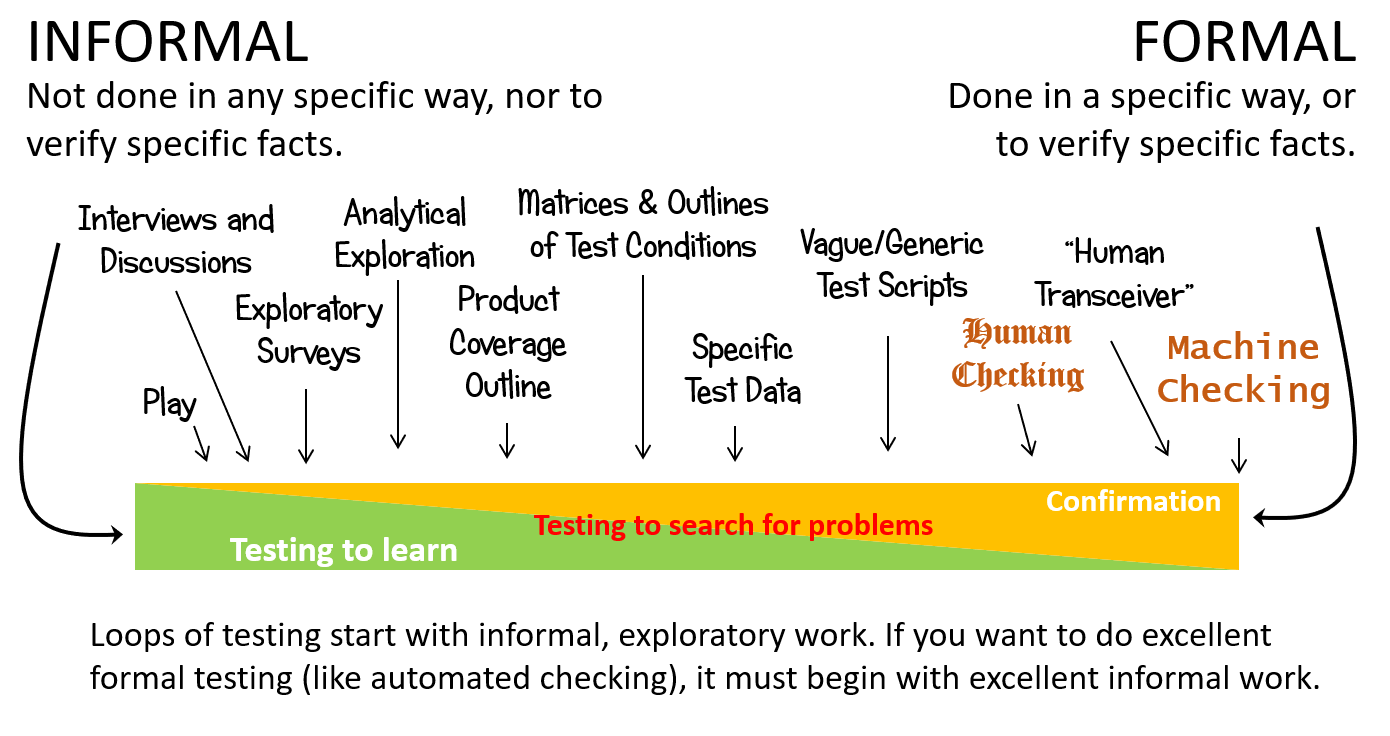

Testing rarely starts with a clean test case.

It begins with exploration and ends with confirmation. A tester navigates a feature, explores it, and improves their understanding of it. Only later does that understanding turn into structured checks.

Traditional test management tools capture only the last step. They are blind to how testing actually happens. The Testing Continuum makes this clear. Testing starts informally. It moves through exploration, questioning, and learning. Only later does it become formal, scripted checking.

Credits: Testing Continuum by Michael Bolton & James Bach (RST)

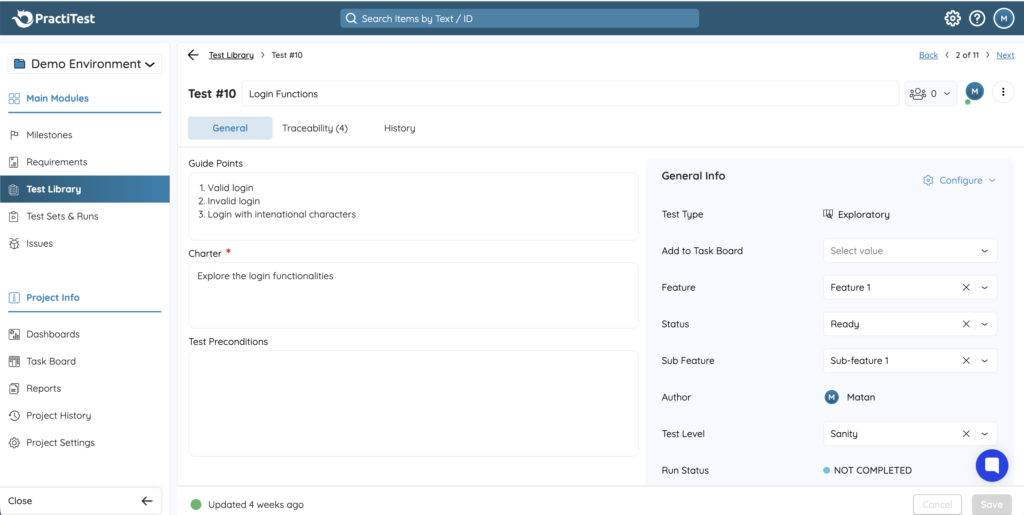

PractiTest supports the full flow, including informal testing, with capabilities to:

- Define exploratory charters to guide sessions

- Capture notes and observations during testing

- Link findings to defects or requirements

- Convert insights into structured test cases

With AI-driven development, exploratory testing is often where the most critical issues surface. If the system cannot capture that phase, you lose valuable context right from the start.
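The exploration-to-confirmation flow can be modeled as a small data structure. This Python sketch is hypothetical: a session holds a charter, notes, and linked findings, and notes a tester prefixes with `STEP:` (an invented convention) are promoted into a scripted test that keeps a pointer back to its charter.

```python
from dataclasses import dataclass, field

STEP_PREFIX = "STEP:"  # hypothetical convention for notes worth scripting

@dataclass
class Session:
    charter: str
    notes: list[str] = field(default_factory=list)
    findings: list[str] = field(default_factory=list)  # linked defect/requirement ids

@dataclass
class ScriptedTest:
    name: str
    steps: list[str]
    source_charter: str      # keeps the exploratory origin traceable
    linked_items: list[str]

def promote(session: Session, name: str) -> ScriptedTest:
    """Turn the session's repeatable steps into a structured test case."""
    steps = [n[len(STEP_PREFIX):].strip() for n in session.notes if n.startswith(STEP_PREFIX)]
    return ScriptedTest(name, steps, session.charter, list(session.findings))

session = Session("Explore coupon edge cases at checkout")
session.notes += [
    "Blank coupon is accepted silently",
    "STEP: Enter a whitespace-only coupon code",
    "STEP: Verify a validation error is shown",
]
session.findings.append("BUG-101")
print(promote(session, "Reject blank coupon codes"))
```

Keeping `source_charter` and the linked findings on the resulting test is what preserves the context that would otherwise be lost between exploration and scripted checking.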

3. Test Automation Integration

Most software teams have adopted test automation well. Visibility into automation results, however, is still broken, and that is a systemic failure across teams.

Teams run automated tests through CI/CD pipelines or on testers’ local machines, but the results remain isolated.

PractiTest bridges this gap through:

- REST API support via secured API tokens

- Support for popular test automation languages like Python, Java, and C#

- Integration support for popular CI/CD and DevOps toolkits

This allows automated test results to flow directly into the test management system instead of staying isolated. The result is a more coherent view of quality. This is how modern test management integrates automation into the quality story.
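Here is a minimal sketch of what reporting a result from a Python test harness can look like, using only the standard library. The endpoint path, the `PTToken` header, and the payload fields (`instance-id`, `exit-code`) reflect my reading of PractiTest’s public API v2 documentation and should be treated as assumptions; check them against the current API reference before use. The function only builds the request; it does not send it.

```python
import json
import os
import urllib.request

# Assumptions: base URL, path, header name, and payload fields follow
# PractiTest's public API v2 docs as I understand them; verify before use.
BASE_URL = "https://api.practitest.com/api/v2"

def build_run_request(project_id: int, instance_id: int, passed: bool,
                      token: str) -> urllib.request.Request:
    """Build (but do not send) a POST that reports one automated run."""
    payload = {
        "data": {
            "type": "instances",
            "attributes": {
                "instance-id": instance_id,
                "exit-code": 0 if passed else 1,  # 0 = pass, non-zero = fail (assumed)
            },
        }
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/projects/{project_id}/runs.json",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "PTToken": token},
        method="POST",
    )

# Hypothetical ids; the token comes from the environment, never from source code.
req = build_run_request(1234, 5678, passed=True, token=os.environ.get("PT_TOKEN", ""))
print(req.get_method(), req.full_url)
```

Sending it is a single `urllib.request.urlopen(req)` call, typically made from a CI step after the test run, with the token injected from a secret store rather than hard-coded.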

One thing teams underestimate is the effort required to make integrations actually work.

PractiTest reduces this friction through:

- Ready-to-use examples and reference implementations

- Clear documentation and integration guides

- Support channels and training resources

This matters because integration only creates value when teams actually use it. A modern test management tool should enable this.

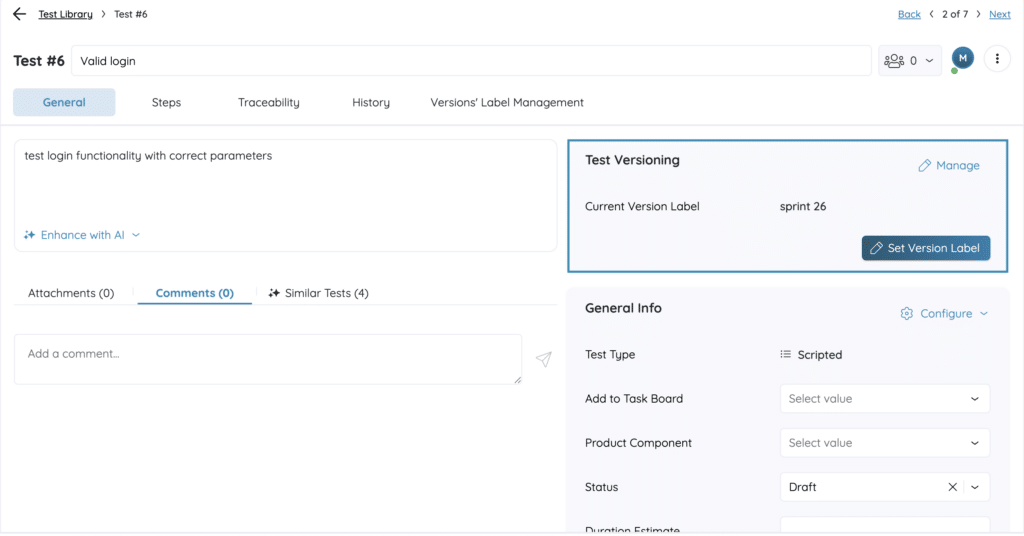

4. Test versioning support

Tests don’t stay stable. They change as the product evolves. Steps get updated. Coverage shifts. Assumptions are revised. Without versioning, these changes are difficult to track.

The problem stays hidden during normal execution, when everything passes. It surfaces during failures: teams may suspect that a test was modified, but they lack a clear record of what changed.

PractiTest treats tests as evolving assets. It offers capabilities for:

- Assigning a new version to a test when something changes

- Tracking updates over time (change log)

- Enabling rollback when needed

Modern test management should treat testing artifacts the way teams treat development artifacts: just as code is versioned carefully, tests should be too.

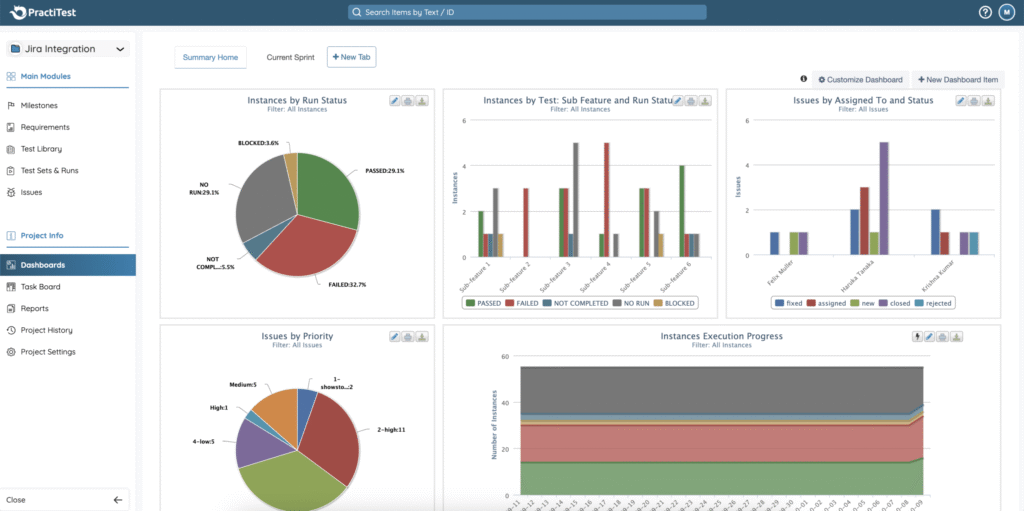
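A test as a versioned asset can be sketched in a few lines of Python. This hypothetical `VersionedTest` class snapshots the previous steps into a change log on every edit and implements rollback by re-applying an old version as a new one. It illustrates the concept only; it is not PractiTest’s data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class VersionedTest:
    name: str
    steps: list[str]
    version: int = 1
    # Change log entries: (version, steps at that version, reason for leaving it, timestamp)
    history: list[tuple[int, list[str], str, datetime]] = field(default_factory=list)

    def update(self, new_steps: list[str], reason: str) -> None:
        """Snapshot the current version into the change log, then apply the edit."""
        self.history.append((self.version, list(self.steps), reason, datetime.now(timezone.utc)))
        self.steps = list(new_steps)
        self.version += 1

    def rollback(self, to_version: int) -> None:
        """Restore an earlier version's steps as a new version, so history stays linear."""
        for version, steps, _, _ in self.history:
            if version == to_version:
                self.update(steps, reason=f"rollback to v{to_version}")
                return
        raise ValueError(f"no version {to_version} in history")

t = VersionedTest("Login", ["Open login page", "Sign in"])
t.update(["Open login page", "Sign in", "Verify dashboard"], reason="cover redirect")
t.rollback(1)
print(t.version, t.steps)
```

Rolling back by creating a new version, much like `git revert`, keeps the change log append-only, so the record of what changed and when is never rewritten.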

5. Powerful Test Reporting and Dashboards

Most dashboards are designed to display data. They answer what happened. They rarely help teams decide what to do next. That’s because they miss context, flexibility, and connection to real use cases. Modern test management should approach reporting as a continuous working system.

Reporting should never be limited to test execution. That limitation is what keeps traditional platforms from answering the real questions:

- Where is the current risk?

- Which areas are unstable?

- Which areas may require more testing?

PractiTest supports reporting across:

- Requirement use cases

- Testing use cases

- Traceability use cases

- Issues and bug trends

It also allows teams to:

- Customize dashboards for diverse stakeholders

- Schedule reports for regular updates

- Modify views based on current needs

- Make final edits to derived test reports

- Share dashboards with people outside the project, even those who aren’t PractiTest users, similar to a public preview option

Different stakeholders need different views, and those needs change over time. If your reporting system cannot adapt to different use cases, support different operations, and connect data across the lifecycle, it will always fall short.
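To show what answering “Which areas are unstable?” can mean in data terms, here is a hypothetical Python sketch that computes, per product area, how often consecutive runs of the same test changed status. The tuple format and the flip-rate metric are assumptions for illustration, not how PractiTest calculates its reports.

```python
from collections import defaultdict

def instability_by_area(runs: list[tuple[str, str, str]]) -> dict[str, float]:
    """runs: (area, test_id, status) tuples in execution order.

    Returns, per area, the share of consecutive runs of the same test whose
    status changed. Higher means less stable.
    """
    last_status: dict[str, str] = {}
    flips: dict[str, int] = defaultdict(int)
    pairs: dict[str, int] = defaultdict(int)
    for area, test_id, status in runs:
        if test_id in last_status:
            pairs[area] += 1
            if status != last_status[test_id]:
                flips[area] += 1
        last_status[test_id] = status
    return {area: round(flips[area] / pairs[area], 2) for area in pairs}

runs = [
    ("checkout", "T1", "pass"), ("checkout", "T1", "fail"), ("checkout", "T1", "pass"),
    ("search", "T2", "pass"), ("search", "T2", "pass"),
]
print(instability_by_area(runs))
```

A report built on a metric like this points attention at areas where results keep changing, which is closer to “where is the risk?” than a raw pass count.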

6. Configurability and flexibility

Processes differ across products, domains, and maturity levels. If a tool is not configurable and flexible, it eventually becomes something teams maintain for compliance, not something they rely on.

PractiTest allows teams to configure the system according to their needs:

- Custom test workflows aligned with team processes

- Custom issue workflows for different issue types

- Custom fields and filters for specific contexts

- Custom dashboards tailored to different roles

- Custom test step parameters to capture your unique testing context
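A custom workflow is, at its core, a set of allowed status transitions. This Python sketch encodes a hypothetical test workflow as a dictionary and rejects moves it doesn’t allow; the status names are invented, and a real tool would let you define them through configuration rather than code.

```python
# Hypothetical custom test workflow: each status maps to the statuses it may move to.
TEST_WORKFLOW: dict[str, set[str]] = {
    "Draft": {"Ready for Review"},
    "Ready for Review": {"Draft", "Ready"},
    "Ready": {"Deprecated"},
    "Deprecated": set(),
}

def transition(current: str, target: str,
               workflow: dict[str, set[str]] = TEST_WORKFLOW) -> str:
    """Return the new status, or raise if the workflow forbids the move."""
    if target not in workflow.get(current, set()):
        raise ValueError(f"{current!r} -> {target!r} is not allowed")
    return target

status = transition("Draft", "Ready for Review")
status = transition(status, "Ready")
print(status)
```

Because the rules live in data rather than in the tool’s code, a team can add a review step or a new status without changing anything else.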

At scale, flexibility alone is not enough. The system also needs to fit into enterprise environments. PractiTest supports:

- Single sign-on (SSO)

- Multi-factor authentication (MFA)

- SCIM-based user management

7. Onboarding support and faster time-to-value

Even the best system fails if teams cannot adopt it effectively. Many tooling problems are not technical problems; they are adoption problems. This is fundamental, because the value of a platform depends directly on how well teams actually adopt and use it.

PractiTest provides structured onboarding support through:

- Dedicated help center (support matters)

- Forums and discussion groups (community matters)

- Video tutorials and text guides (onboarding matters)

- Certification programs (expertise matters)

The goal is simple: value should appear in weeks, not months.

It’s also worth checking user ratings on platforms like G2 to see how a test management system works for real users in real contexts.

PractiTest’s G2 ratings, for example, reflect how it performs in real environments, not just in feature comparisons.

Benefits of Modern Test Management (e.g., PractiTest)

When these features come together, the difference shows up in how teams work every day. You see it in clearer discussions, faster decisions, and less confusion about testing and product quality.

Once a modern test management tool is in place, teams can begin to:

- Focus on high-value testing instead of covering everything

- Get real-time visibility into quality without chasing updates

- Build traceability across requirements, tests, and defects

- Connect automation results with broader testing efforts

- Adapt processes without fighting the tool

- Rely on reports to guide decisions instead of just reviewing activity

Modern test management doesn’t help you test more. It helps you understand better and gain clarity faster. And that’s what matters as systems and teams grow.

If you are evaluating your current test management system, weigh it against these criteria so that your testing function stays future-ready.

You can also talk to the PractiTest team about their unified methodology for accelerating test management in modern teams.

Happy testing!